Mind the Gap: Trust in AI Search Summaries

Trust may not be everything, but it counts for a lot. Companies spend a lot of time and energy cultivating trust with customers, and it's a big part of brand equity. Trust gets deals closed.

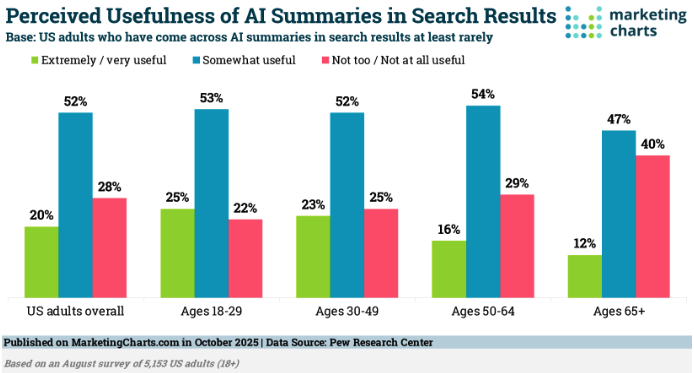

So it's interesting that, as AI-driven search result summaries have become ubiquitous, a trust gap is emerging: although searchers see the summaries as helpful, only 6% of respondents in a recent Pew Research survey have "a lot" of trust in the AI summaries presented with their search results, and 12% don't trust them at all. What's also interesting is that the older the respondent, the less useful they found the AI summaries.

Could part of this trust gap be due to growing awareness of AI model "hallucinations," and will it disappear as tech companies improve the capabilities of their LLMs? Or maybe users just want clearer sources linked to the summaries so they can judge for themselves? I'm a "trust but verify" kind of guy. It will be interesting to see how these trust trends change over time.